Following the success of the QuadForce 2450 modification (GeForce GTS450 -> Quadro 2000), I went on to investigate whether the same modification would work on the GTX470 to turn it into a Quadro 5000, and on the GTX480 to turn it into a Quadro 6000. I also tried modifying a GTX580 into the somewhat obscure Quadro 7000.

| MODEL | CORE CONFIGURATION | MEMORY CHANNELS | MEMORY |
|---|---|---|---|
| GeForce GTX470 | 448:56:40 | 5x | 1.25GB |
| GeForce GTX480 | 480:60:48 | 6x | 1.50GB |
| Quadro 5000 | 352:44:40 | 5x | 2.50GB |
| Quadro 6000 | 448:56:48 | 6x | 6.00GB |

In all three cases the modifications were successful and the cards worked as expected. Features like VGA passthrough work on the 5000 and 6000 models, and gaming performance is excellent, as you would expect: I can play Crysis at 3840×2400 in a virtual machine. Again, the extra GL functions aren’t there (if you compare the output of glxinfo between a real Quadro and a QuadForce, you will find a number of GL primitives missing), so some aspects of OpenGL performance are still crippled. PhysX support is also a little hit-and-miss. In a VM it seems to work on Quadro cards under Windows 7, but not under XP. On bare metal under Windows XP it works. This appears to be down to the Quadro driver itself rather than to the cards not being genuine Quadros.
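The glxinfo comparison mentioned above can be scripted. The following is a minimal sketch, assuming the differences show up as `GL_`/`GLX_` extension name strings in the saved output of each card; the function names are mine and not part of any tool.

```python
import re


def gl_extensions(glxinfo_text):
    """Return the set of GL_*/GLX_* extension names found in glxinfo output."""
    return set(re.findall(r"\bGLX?_[A-Za-z0-9_]+\b", glxinfo_text))


def missing_extensions(reference_text, candidate_text):
    """Names present in the reference output but absent from the candidate."""
    return sorted(gl_extensions(reference_text) - gl_extensions(candidate_text))
```

To use it, save the output of `glxinfo` on each card to a text file, read both files, and pass the genuine Quadro's text as `reference_text` and the QuadForce's as `candidate_text`.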

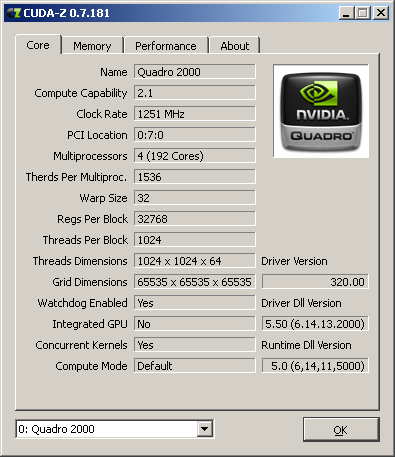

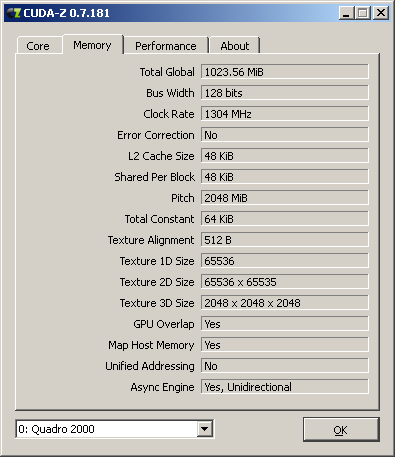

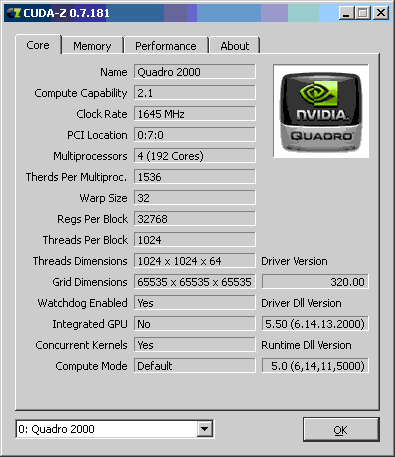

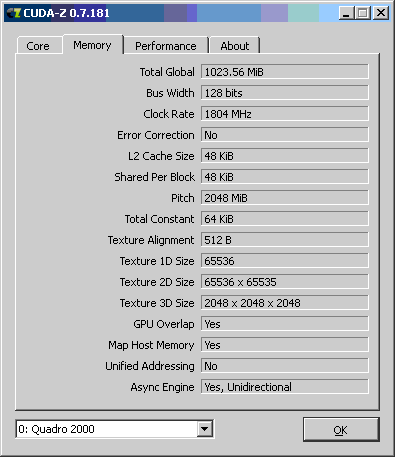

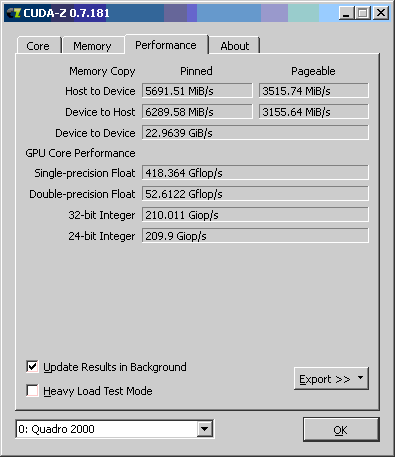

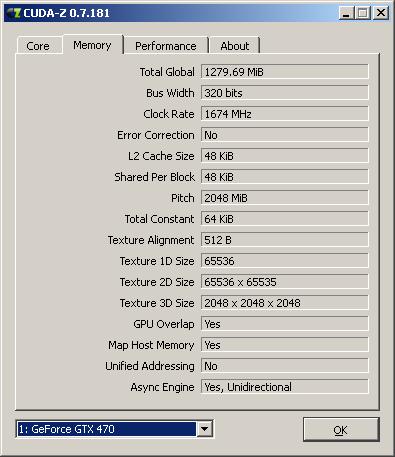

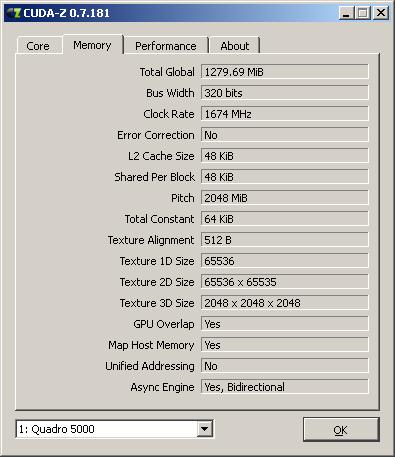

Finally, the GF100-based cards (GTX470/480) also get an extra feature enabled by the modification: a second DMA channel. Normally there is a unidirectional DMA channel between the host and the card. Following the modification, a second DMA channel in the other direction is activated. This has only a moderate impact on gaming performance, but it can have a very large impact on the performance of I/O-bound number-crunching applications, since it increases the memory bandwidth between the card and system memory (you can read from and write to GPU memory at the same time). The CUDA-Z Memory report for the GTX470 before and after modifying it into a Quadro 5000 shows the change: the GTX470 only has a unidirectional async memory engine, but after the modification the engine becomes bidirectional.

The same happens on the GTX480: its async engine also becomes bidirectional after modification.
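To see why a second DMA channel matters for I/O-bound workloads, here is an idealized back-of-the-envelope model. It is a sketch under stated assumptions, not a measurement: it assumes the same bandwidth in each direction and ignores latency, protocol overhead and any overlap with computation. The function and parameter names are hypothetical.

```python
def transfer_time(upload_bytes, download_bytes, bandwidth_bytes_per_s, dma_channels):
    """Idealized time in seconds to move data to and from the card.

    With one DMA channel the two directions are serialized; with two
    channels they are assumed to overlap completely.
    """
    if dma_channels not in (1, 2):
        raise ValueError("dma_channels must be 1 or 2")
    if dma_channels == 1:
        return (upload_bytes + download_bytes) / bandwidth_bytes_per_s
    return max(upload_bytes, download_bytes) / bandwidth_bytes_per_s
```

In this model, a job that uploads and downloads equal amounts of data halves its transfer time with two channels, while a job that moves data in one direction only gains nothing.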

The Quadro 7000 is a little different from the other two. It doesn’t have dual DMA channels, and Nvidia don’t list it as MultiOS capable. The drivers do not make the adjustments necessary for it to work with VGA passthrough. That means that, unfortunately, the benefit of modifying a GTX580 is questionable. Note, however, that the Quadro 7000 was never aimed at the virtualization market; it was only available as part of the QuadroPlex 7000 product, an external GPU enclosure designed for driving multiple monitors for various visualisation work. Hence the lack of MultiOS support on it.

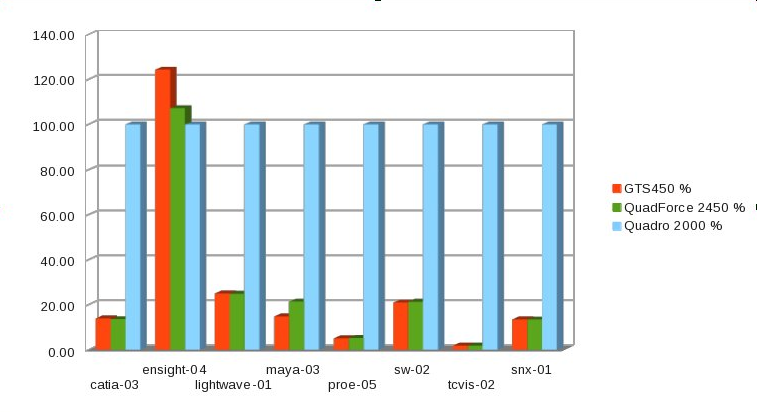

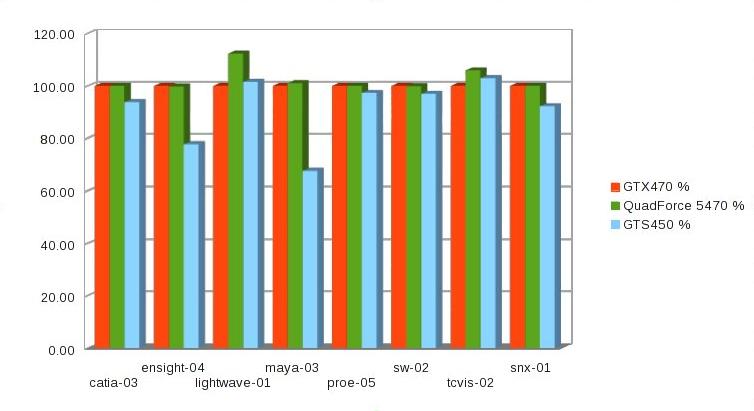

Here is how the QuadForce 5470 does in SPECviewperf (GTX470 = 100%):

Compared to the QuadForce 2450, the performance improvements are more modest: the only real difference is observable in the lightwave benchmark.
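For anyone repeating the comparison, normalizing scores against the unmodified card (baseline = 100%) is simple arithmetic. A minimal sketch, assuming the per-test scores have been collected by hand into two dictionaries keyed by test name (the dictionary layout and function name are my own choice):

```python
def relative_scores(baseline, modified):
    """Express each modified-card score as a percentage of the baseline score."""
    return {
        name: 100.0 * modified[name] / baseline[name]
        for name in baseline
        if name in modified
    }
```

A value of 100.0 means no change for that test; anything above it is an improvement over the unmodified card.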

Unfortunately, my QuadForce 6480 is currently in use, so I cannot get measurements from it, but since they are both based on the GF100 GPU, the results are expected to be very similar.

On the QuadForce 7580 there was no observed SPEC performance improvement.

I have since acquired a Kepler-based 4GB GTX680 and successfully modified it into a Quadro K5000. Modifying it into a Grid K2 also works, but there don’t appear to be any obvious advantages to doing so at the moment (the K5000 works fine for virtualization passthrough, even though it wasn’t listed as MultiOS last time I checked). This QuadForce K5680 is why my GTX470 became free for testing again. More on Quadrifying Keplers in the next article. I also have a GTX690 now (essentially two 680s on the same PCB), which will be replacing the QuadForce 6480, so this will also be written up in due time. Unfortunately, however, quadrifying Keplers in most cases requires some hardware modifications as well as BIOS modifications. I will post more on all this soon, along with a tutorial on soft-modding.